Many clinical laboratories rapidly scaled testing capacity in response to the COVID-19 pandemic. But scaling throughput, whether for COVID-19 testing specifically or to grow the lab business in general, is not a simple process. Even the smallest inefficiencies can add up at scale, so labs need to do everything in their power to make their processes as efficient as possible.

For labs wanting to scale quickly and efficiently, we’ve identified five key factors related to lab software, particularly laboratory information management systems (LIMS), that can help you minimize the challenges and ensure a smoother ramp-up of throughput.

1. Identify requirements clearly

Your lab needs to have a clear understanding of the requirements you’re trying to meet to evaluate whether the software can handle the load. That means revisiting your full end-to-end workflow and asking all teams involved to review it to ensure there are no disconnects and that it is an accurate reflection of the current environment. We also recommend that lab requirements are evaluated by someone whose role is functional across all departments, including sample accessioning, wet lab, bioinformatics, and analysis, and who understands how the pieces fit together.

It’s also important to define the level of throughput you want to achieve. How many samples does your lab need to process per day? How might that number change over time? Look at the specimen lifecycle from ordering all the way to result delivery and make sure your throughput goals are consistently supported by all the software in your software stack.

2. Plan your infrastructure

IT infrastructure, from hosting servers to the software running on them, has huge performance implications for labs wanting to scale.

Hosting servers

Before beginning a throughput scaling project, we recommend researching whether a vendor-managed cloud-based server or a self-managed on-premise server will work best for achieving throughput goals with your LIMS. There are pros and cons to both options, so you’ll need to work with IT and bioinformatics team stakeholders to choose which one makes the most sense.

With vendor-managed servers, computing resources can be rapidly adjusted to accommodate specific performance demands, and you don’t need to be concerned about capital expenses. However, internet speed and quality of connection affect the user experience, and instrument integrations become more complicated and require special networking and security considerations and customizations.

While self-managed on-premise servers require the support of a team of in-house IT professionals who understand server management, they give you full control and the ability to configure infrastructure to meet performance goals. But you’ll need to ensure you have enough computing resources, including central processing (CPU) and memory (RAM), to handle processing at a larger scale. You should also consider whether you require additional dedicated servers to optimize performance.

If you choose to manage your own on-premise servers, we recommend distributing different application stacks on separate servers—for example, hosting the database on one server, applications on another, and reporting on a third. Another way to boost performance is to mirror the database so that you can point non-essential services to a replicated version. If you do this, operational reporting will not affect application server performance or consume database resources in the background.

Software systems

At the same time, it’s critical to consider the lab software. We recommend asking the following questions about your LIMS:

- Can your LIMS handle the capacity you’re aiming for or does it have a cap on throughput? What are the limits of active and historical samples? What is the recommended hardware for your LIMS for the throughput goal? It’s worth discussing these questions with your LIMS vendor to assess any potential limitations.

- Which version of the LIMS are you running? Does this version have the latest performance improvements? Does your current license enable the necessary capacity?

- Are there any known performance bottlenecks that you should avoid? How will your implementation be influenced by these bottlenecks? For example, is there an upper limit on queue sizes or limits to the length of lists that can be displayed? Do these limits impact the throughput you’re aiming for? How will you work around these limits?

- If your lab is using out-of-the-box integrations, have they been built with scale in mind? Will custom integrations be required to meet your throughput requirements?

Speaking of integrations, all integrations between the LIMS and other systems need to be optimized. For instance, look at whether or not you can transfer data in bulk or use batch processing to improve speed and efficiency. Certain types of integration (file-based, HL7-based, or API-based) may be better suited to transferring high volumes of data.

Consider the impact of regulatory requirements too. Auditing, monitoring, and encrypting data all have performance implications, so you should evaluate and resolve any bottlenecks.

3. Plan your protocols and how you will release upgrades

A further way to ensure efficiency is to streamline your lab’s protocols. Your objective should be to avoid any possible confusion for lab analysts, so they can work as quickly as possible. For example, look for any end-user constraints such as interfaces that make manual data entry cumbersome or require multiple software system logins.

For custom protocols, check that you have the resources to both build them and continuously improve them. Achieving ambitious throughput targets requires significant iteration and optimization. How many iterations per year can your lab support deployment-wise? Remember, this is a marathon, not a sprint.

It’s always a good idea to have a separate LIMS test environment for performing research and development (R&D). This lets you test changes to your lab processes before releasing them to your live production environment. You’ll need to consider how to move changes you’ve qualified through R&D to production, how much downtime the changes will mean for the production environment, and how you’ll migrate samples in flight into the workflows. Practice and plan protocol upgrades carefully to minimize downtime because turnaround time is crucial at a high throughput level.

4. Optimize workflow efficiency

Another way to increase efficiency when scaling throughput is to automate your wet lab techniques. For instance, consider using liquid handlers, and take into account the total number of instruments being used in the lab and whether you could use instrument integrations to replace manual procedures.

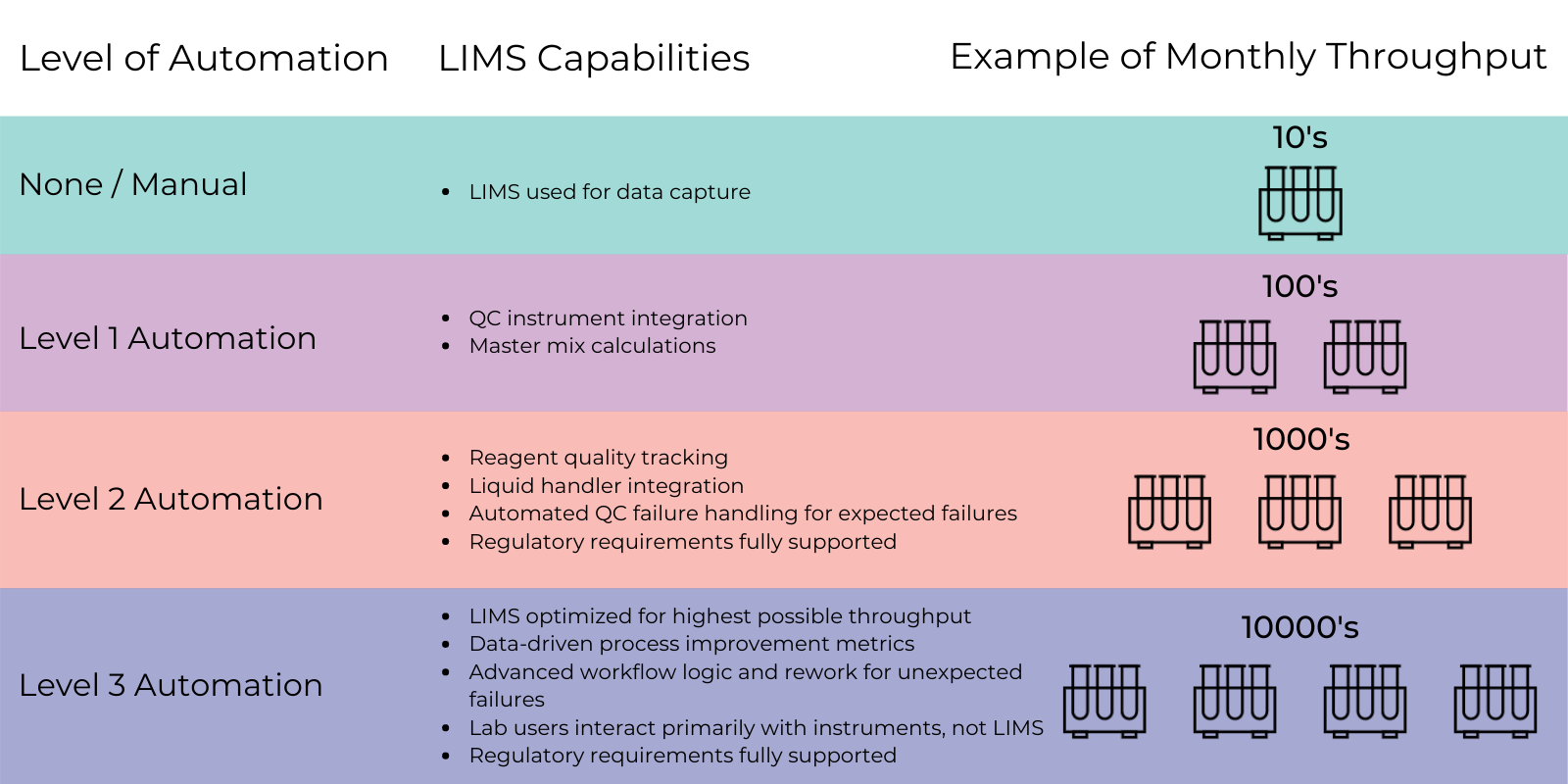

Evaluate your workflow to determine what level of automation you need at each point depending on your goal throughput (see the diagram below). One step might be fine with level 2 automation, but another step might need level 3 automation, and so on.

LIMS capabilities directly affect your ability to scale throughput.

The more you can minimize end-user time spent in the LIMS by streamlining interactions with the interface—such as by decreasing the number of mouse clicks a user must make to enter data—the more time your highly-trained staff has to perform more complex tasks.

For samples that have unexpected results, rework paths become more crucial when you’re operating on a bigger scale. A manual workaround of several clicks might be okay for one or two samples but could be a major cause of frustration and reduced speed across hundreds of samples. Some workarounds might need to involve programmatic logic. The main idea is to think through rework paths for each step of your workflow and make sure your lab is able to deal with these unexpected failures.

Lastly, for sample accessioning, which requires human intervention, consider using a custom-built app to support and streamline the process.

5. Monitor, maintain, and continuously improve over time

When it comes to monitoring and maintaining lab software, having the right people available is crucial, especially if your lab is working 24/7. In some cases, off-the-shelf server monitoring solutions can help you identify potential issues before they become critical. They can also help identify software bottlenecks, and you can leverage the operational reporting capabilities of your LIMS to identify processes that could be optimized. But it’s your team who will need to handle maintenance tickets or bugs that come up in your live production environment.

This is particularly important for those times when a bug causes the lab to halt operations. The quicker you can get your systems up and running again, the better for both morale and the bottom line. You’ll want to review your support escalation policies for each branch of your lab, too. And determine how much coverage is needed, how support is tiered, and which teams need to be on standby.

Critical operational software needs continuous care and feeding. In our experience, labs typically need 25% of their initial investment in software year-over-year to keep it up-to-date and maintained, which is a key part of keeping the lab running efficiently. On the topic of budget, it’s worth repeating here that achieving ambitious throughput targets requires significant iteration and optimization—which takes time and money.

Putting it all together

Planning your scaling initiative is critical for success. Labs willing to consider these five factors upfront will face fewer obstacles throughout their scaling project while at the same time incurring less long-term risk. Knowing that you have the supporting infrastructure, systems, and processes in place to be able to scale efficiently will reduce the pressure on your team now and down the road—and make future improvements simpler and less costly.

If you’d like to work with a consultant with experience in scaling lab throughput, contact us today. We can provide expert guidance and hands-on implementation help with designing and setting up the infrastructure, software systems, and automation you need. This will help you reduce your software maintenance burden and scale up as quickly and robustly as possible.